What function does the Reward Model (RM) serve in the Reinforcement Learning from Human Feedback (RLHF) process?

Answer

It predicts which response a human would prefer, acting as a proxy for the human judge.

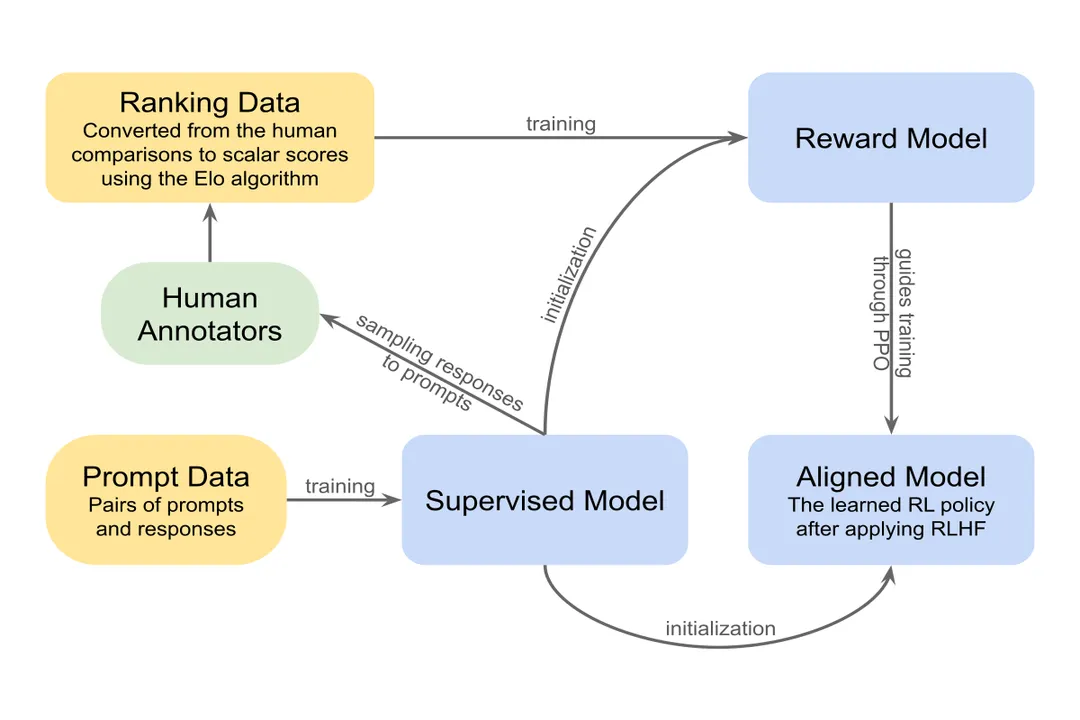

The Reward Model (RM) is a separate model trained within the RLHF pipeline so that nobody has to hand-engineer a reward function for complex, subjective qualities. Human labelers produce comparison data by ranking several model outputs generated for the same prompt, and this preference data is used to train the RM. Once trained, the RM stands in for the human judge: given a prompt and a response, it outputs a single scalar score estimating how strongly humans would prefer that response. That score then serves as the reward signal when the original large language model is fine-tuned with a reinforcement learning algorithm such as PPO.
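To make the training step concrete, here is a minimal sketch, assuming a common choice from the RLHF literature: a pairwise (Bradley-Terry style) loss, -log sigmoid(r(chosen) - r(rejected)), which pushes the RM to score the labeler-preferred response higher. Everything else is a hypothetical stand-in: a linear model over hand-made feature vectors replaces the neural RM, the functions `score`, `pairwise_loss` and `train_reward_model` are illustrative names, and the comparison data is invented.

```python
import math

# Hypothetical toy setup: each (prompt, response) pair is already encoded as a
# feature vector. A real RM is a neural network with a scalar output head;
# a linear model stands in for it here.

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def score(weights, features):
    """Scalar reward r(x, y) for one encoded (prompt, response) pair."""
    return sum(w * f for w, f in zip(weights, features))

def pairwise_loss(weights, chosen, rejected):
    """-log sigmoid(margin), computed stably as softplus(-margin)."""
    margin = score(weights, chosen) - score(weights, rejected)
    z = -margin
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def train_reward_model(comparisons, dim, lr=0.5, epochs=200):
    """Fit the weights by gradient descent on the pairwise loss.

    comparisons: list of (chosen_features, rejected_features) tuples, i.e.
    each human ranking reduced to "labelers preferred A over B".
    """
    weights = [0.0] * dim
    for _ in range(epochs):
        for chosen, rejected in comparisons:
            margin = score(weights, chosen) - score(weights, rejected)
            # dLoss/dMargin = -sigmoid(-margin), so stepping against the
            # gradient moves the weights toward (chosen - rejected).
            step = lr * sigmoid(-margin)
            for i in range(dim):
                weights[i] += step * (chosen[i] - rejected[i])
    return weights

# Invented comparison data: feature 0 ~ "helpfulness", feature 1 ~ "toxicity".
comparisons = [
    ([0.9, 0.1], [0.2, 0.1]),
    ([0.7, 0.0], [0.7, 0.8]),
    ([0.8, 0.2], [0.3, 0.6]),
]
weights = train_reward_model(comparisons, dim=2)

# Once trained, the RM scores a new response; this scalar is what the RL step
# (for example PPO) would receive as its reward.
reward = score(weights, [0.6, 0.1])
print(f"weights={weights}, reward={reward:.3f}")
```

With this data the learned weights end up positive on the "helpfulness" feature and negative on the "toxicity" feature, so every preferred response outscores its rejected partner. In a real pipeline the same loss is applied to a transformer's scalar output, and the policy model never sees the human labels directly, only the RM's scores.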
