What is the history of the single-board microcontroller?

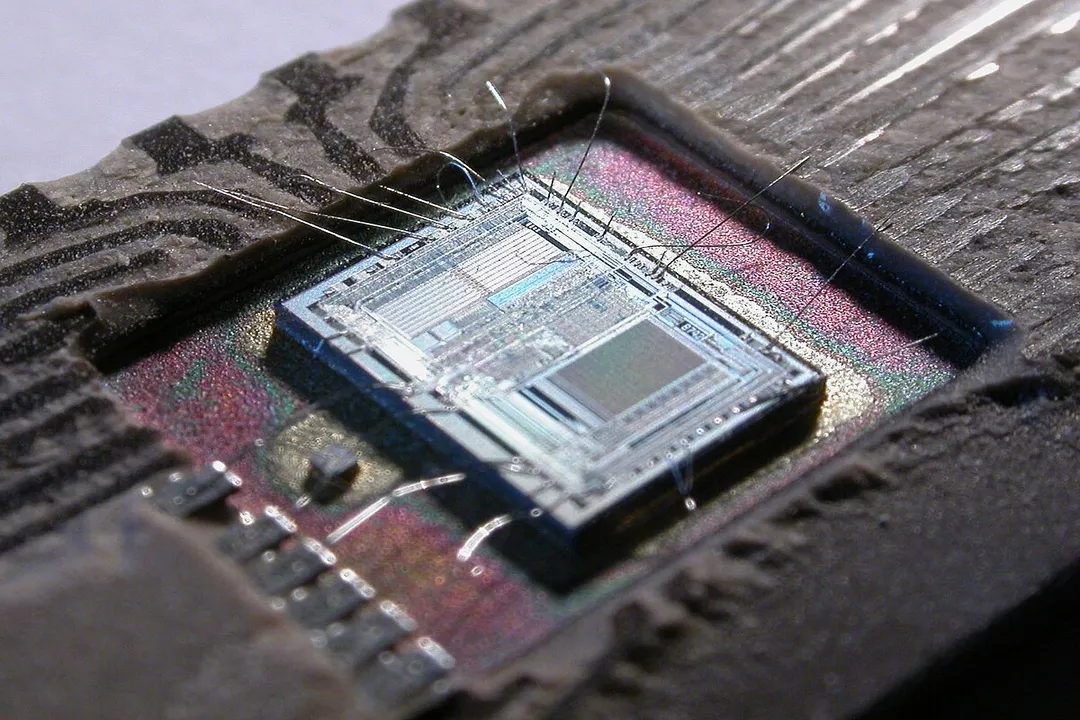

The story of the single-board microcontroller isn't a straight line from one invention to the next. It is a winding path that merges the intense miniaturization efforts of the calculator industry with the growing demand for dedicated, low-cost processing power in everyday objects. [2][3] To understand the microcontroller (MCU), one must first distinguish it from its close relative, the microprocessor (MPU). A microprocessor contains only the Central Processing Unit (CPU) core and needs external chips for memory, input/output (I/O) ports, and other support functions. [4][8] The microcontroller, in contrast, is designed for embedded control: it integrates the CPU, volatile memory (RAM), non-volatile memory (ROM or Flash), and various I/O peripherals, such as timers and communication interfaces, onto a single integrated circuit (IC). [4][5][8]

# Calculator Roots

The earliest steps toward the integrated controller were driven not by industrial automation but by the consumer electronics boom, specifically the digital calculator. [3] Before the true MCU existed, designers relied on complex combinations of dedicated logic chips to handle sequential operations. [3] The drive to reduce the size and cost of these devices pushed semiconductor manufacturers to condense more functions onto fewer chips. [3] Texas Instruments was crucial in pioneering this approach: its single-chip calculator work led to the TMS1000 family, introduced in 1974 and often cited as the first commercially available microcontroller, laying the groundwork for general-purpose controllers. [9] These early calculator chips, while specialized, demonstrated the economic viability of highly integrated digital control systems. [3]

# Integrated Design Emerges

The transition from these highly specialized calculator ICs to what we recognize today as a microcontroller involved consolidating the key ingredients: processing logic, storage, and the means to interact with the outside world. [2] An MCU needs on-chip memory for the program (firmware) and operational data, along with built-in interfaces to handle tasks like reading a button press or driving a display segment. [4][5]

The key breakthrough was achieving this integration affordably and reliably. Early microcontrollers were not just shrunken general-purpose computers; they were purpose-built for specific control tasks, often trading raw speed for low power consumption and minimal silicon area. [4] This efficiency meant they could be embedded inside appliances, automobiles, and industrial machinery where cost and power budgets were extremely tight. [4]

# Board Level Differentiation

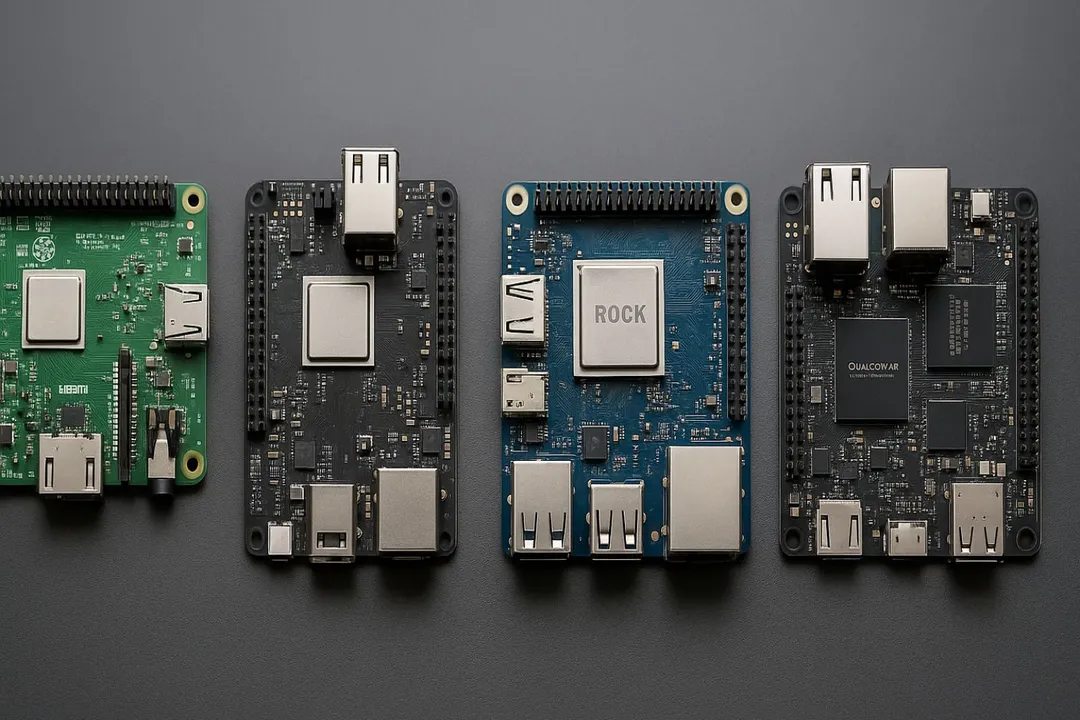

When discussing the history of the single-board microcontroller, it is important to separate this concept from the history of the single-board computer (SBC). [6] Early SBCs, many of them popular with hobbyists, were built around microprocessors and aimed to provide a complete, functional computing environment, sometimes running a simple monitor program or a small operating system. [1][6] These boards were generally larger, more expensive, and focused on general-purpose computing rather than tight, repetitive real-time control. [7]

While early SBCs showed that an entire system could fit on one printed circuit board (PCB), the MCU's primary focus remained on embedding intelligence directly into devices. The entire system might be just the MCU chip itself, perhaps with a handful of external resistors or capacitors. [6] These accessible, self-contained SBCs did, however, shape how MCUs were later made approachable. They taught engineers and enthusiasts about compact system design, a lesson that carried over into user-friendly MCU development platforms that mount the MCU on a simple breakout board. [1]

One often-overlooked factor is the trade-off between cost and power. Early general-purpose microprocessors, like those used in early SBCs, were expensive and power-hungry, which made them a poor fit for many embedded tasks. The MCU succeeded because it was cheap and drew little power from the start, so it could be deployed nearly everywhere, from washing machines to simple toys, a market segment that MPU-based designs couldn't serve economically for decades. [3][4]

# Architectural Standardization

As the technology matured through the late 20th century, different semiconductor companies established their own architectures. Some followed a Harvard organization (separate program and data memories), others a von Neumann organization (a single shared memory), but the common denominator remained the on-chip integration of essential elements. [5] On-chip timers and analog-to-digital converters (ADCs) became defining features, moving the chip far beyond simple counting and logic into genuine sensing and interaction. [4]

This standardization of function (a CPU, a fixed amount of RAM, non-volatile storage, and the necessary peripherals) made software development tools more predictable across hardware vendors. [5] Programmers learned to write firmware that directly manipulated each chip's hardware registers, which defined how the device behaved. [5]

Early SBCs typically loaded their software from external storage and sometimes ran an operating system, whereas an MCU's compiled firmware was stored directly in the chip's own non-volatile memory: at first as factory-programmed mask ROM or UV-erasable EPROM, and later as flash that could be reprogrammed in-system. [6] This difference in how software was deployed cemented the MCU's role as a dedicated appliance controller rather than a general computing platform. [6]

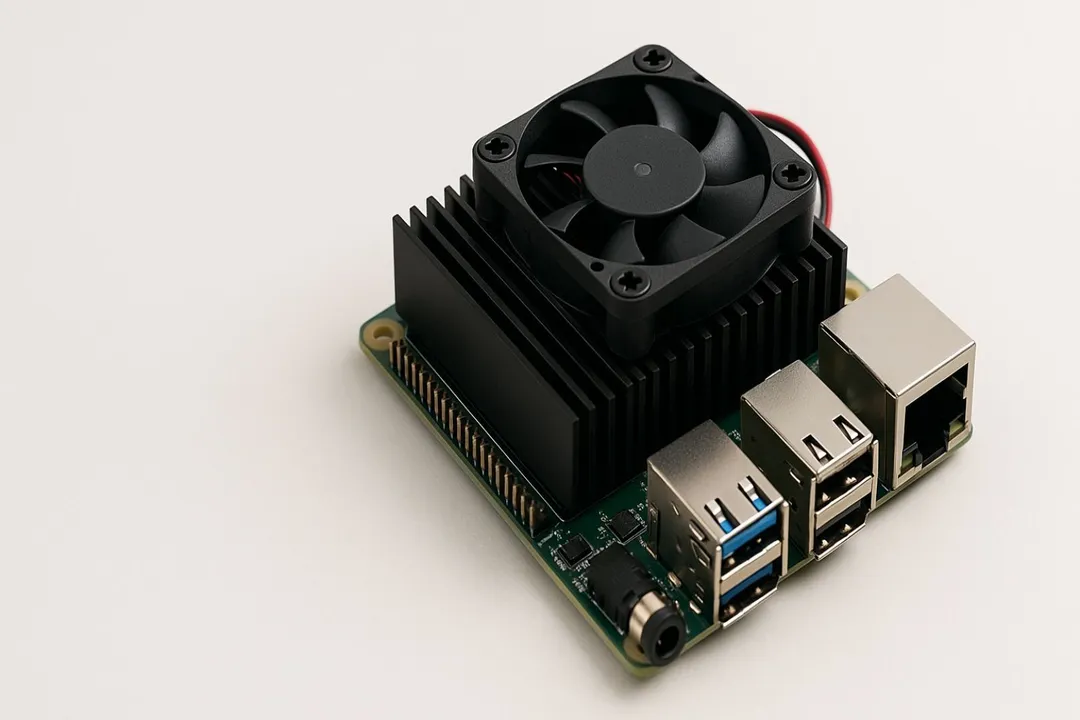

The single-board concept later resurfaced in highly popular hobbyist and educational tools. These modern development boards, of which the Arduino boards that appeared in the mid-2000s are a widely known example, pair an MCU with a simple USB interface and easily accessible pins, effectively acting as a single-board controller environment. [6] This form factor puts an integrated controller on a simple PCB, typically running bare-metal code or a lightweight real-time operating system. The result is a development experience similar to that of an early hobbyist SBC, but focused entirely on control tasks. [1][6]

The crucial takeaway from tracing this history is that the microcontroller succeeded by being less than a computer—by shedding the external complexity and maximizing efficiency for control—while the single-board computer succeeded by aiming to be a complete computer in a tiny footprint. [7] Both paths, however, eventually converged in accessible, low-cost development platforms that define the current landscape of embedded electronics. [1]

# Citations

[1] Then and now: a brief history of single board computers

[2] History of Microcontrollers | Toshiba Electronic Devices & Storage ...

[3] A History of Early Microcontrollers, Part 1: Calculator Chips Came First

[4] A Brief History of Single Board Computers (PDF)

[5] Introduction to the Microcontroller, Millersville University (PDF)

[6] Development Board History and Differences from Single ... - Utmel

[7] Single-Board Computers: Hidden Power Features Most Users Miss

[8] Microcontroller - Wikipedia

[9] Little Chips from Texas: The Early History of the Microcontroller Part 1